Were market studies effective in 2020?

When we post a study in our free reports or on social media, there is often a chorus of complaints along the lines of, "Why don't you show the full results?", or "Why are you always bearish?", or "Don't you know that this time is different?"

Well...

1) We're not going to post our full work for free, 2) We're not always - or even usually - bearish, and 3) Yes, this time is different, it always is.

There is always a narrative. But time and again, we've seen that relying on the history of human behavior as a base case is consistently more effective than betting on the exceptions.

There was an academic study published a few years ago that looked at the forecasting record of public market analysts. The inevitable conclusion was that the average forecaster is right less than 50% of the time, so why bother?

These studies always ignore expectation and asymmetry. The winning percentage of a forecaster matters little if the relatively few winners more than make up for the multitude of losers. And a forecaster with a high winning percentage can be a horrid person to follow if the few losers wipe away all the gains from the many winners. Asymmetry is among the most important aspects of determining the worth of a forecast, but that never seems to be addressed in takedowns of forecasting records.
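To put numbers on that point, here is a minimal sketch of the usual expectancy arithmetic: win rate times average win, minus loss rate times average loss. The function name and both forecasters are hypothetical, made up purely for illustration; they are not drawn from our studies or from the academic paper.

```python
def expectancy(win_rate: float, avg_win: float, avg_loss: float) -> float:
    """Expected profit per forecast, in the same units as avg_win and avg_loss."""
    if not 0.0 <= win_rate <= 1.0:
        raise ValueError("win_rate must be between 0 and 1")
    return win_rate * avg_win - (1.0 - win_rate) * avg_loss


# Hypothetical forecaster A: right only 40% of the time,
# but the winners are three times the size of the losers.
forecaster_a = expectancy(win_rate=0.40, avg_win=3.0, avg_loss=1.0)

# Hypothetical forecaster B: right 80% of the time,
# but each loser is five times the size of a winner.
forecaster_b = expectancy(win_rate=0.80, avg_win=1.0, avg_loss=5.0)

print(f"A: {forecaster_a:+.2f} per forecast")
print(f"B: {forecaster_b:+.2f} per forecast")
```

Under these made-up numbers, the forecaster who is wrong most of the time comes out ahead, and the one who is right 80% of the time loses money. Winning percentage alone says nothing about either outcome.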

For every study we do at SentimenTrader that has a solid conclusion, we track it in the Active Studies. Research that includes one of these compelling studies will have a forecast attached to it showing whether the forecast is for higher prices or lower prices, an ETF that tracks the appropriate market, and whether the time frame is short-term (< 1 month), medium-term (1-6 months) or long-term (> 6 months).

To remain accountable and transparent, after the studies’ effective time frames have passed, we go back and rate them based on whether they were a good guide or not, and they then show up in the Archived Studies. There are over 740 studies that have been rated.

Near the end of each year, we objectively review studies that were closed during the year. The ratings are based on how the indicated market performed over the time frame that was most consistent in the study. They range from 1 to 5 stars.

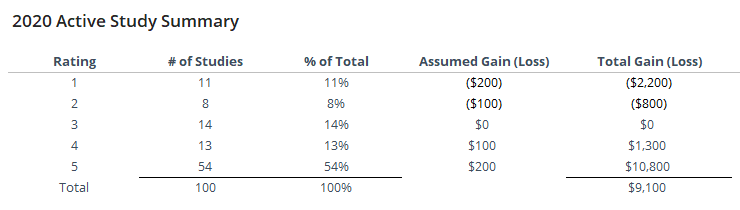

Below, we can see a breakdown of study results for 2020. Using a conservative rating approach, the studies continue to show a positive expectation. This year's studies were effective overall. The results don't include a handful of studies that aren't yet graded because they are still active, almost all of which should end up being rated positively.

Assume that we lost as much on the bad studies as we gained on the good ones (basically, that our stop losses were equal to our profit targets) and that we put $100 on the market for each of the 100 studies. We would then have made $9,100, or about a 91% return on the $10,000 total “invested.” That's about double the rate of return from other years.
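The return figure is simply the net gain divided by the total amount staked. The short sketch below reproduces only that last step, using the numbers quoted above. The function name is hypothetical, and it does not model how individual star ratings translate into gains or losses.

```python
def simple_return(net_gain: float, stake_per_study: float, n_studies: int) -> float:
    """Net gain as a fraction of the total amount staked across all studies."""
    total_staked = stake_per_study * n_studies
    return net_gain / total_staked


# Figures quoted in the text: $9,100 net, $100 per study, 100 studies.
print(f"{simple_return(9_100, 100, 100):.0%}")  # prints 91%
```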

This is not a trading system and would be hard or impossible to implement if it were. It’s just a shorthand way of trying to determine if the studies have been helpful over the years.

Many of the 5-star studies were medium- to long-term ones that focused on stocks, thanks to extreme pessimism and then incredible momentum during the spring and summer months. We see this consistently - when there is a big cluster of studies pointing one direction or the other, in whatever market, they tend to be mostly accurate.

Below is a summary of study results for the past 3 years.

As long as the expectation of our studies continues to be consistently positive, then we feel there is value in analyzing markets this way. We think it's unique to our service since most others don't objectively track and grade their analysis, hoping nobody notices.

It's not always easy, because sometimes it doesn't work, and nobody likes to admit failure. But acknowledging the difficulties and looking at things the way they are instead of how we want them to be is a good way to improve, and that's something we're always looking to do.